Abstract

How can artificial agents learn to solve many diverse tasks in complex visual environments without any supervision? We decompose this question into two challenges: discovering new goals and learning to reliably achieve them. Our proposed agent, Latent Explorer Achiever (LEXA), addresses both challenges by learning a world model from image inputs and using it to train an explorer policy and an achiever policy via imagined rollouts. Unlike prior methods that explore by reaching previously visited states, the explorer uses foresight to plan toward unseen, surprising states, which then serve as diverse targets for the achiever to practice on. After the unsupervised phase, LEXA solves tasks specified as goal images zero-shot, without any additional learning. LEXA substantially outperforms previous approaches to unsupervised goal reaching, both on prior benchmarks and on a new challenging benchmark with 40 test tasks spanning four robotic manipulation and locomotion domains. LEXA further achieves goals that require interacting with multiple objects in sequence.

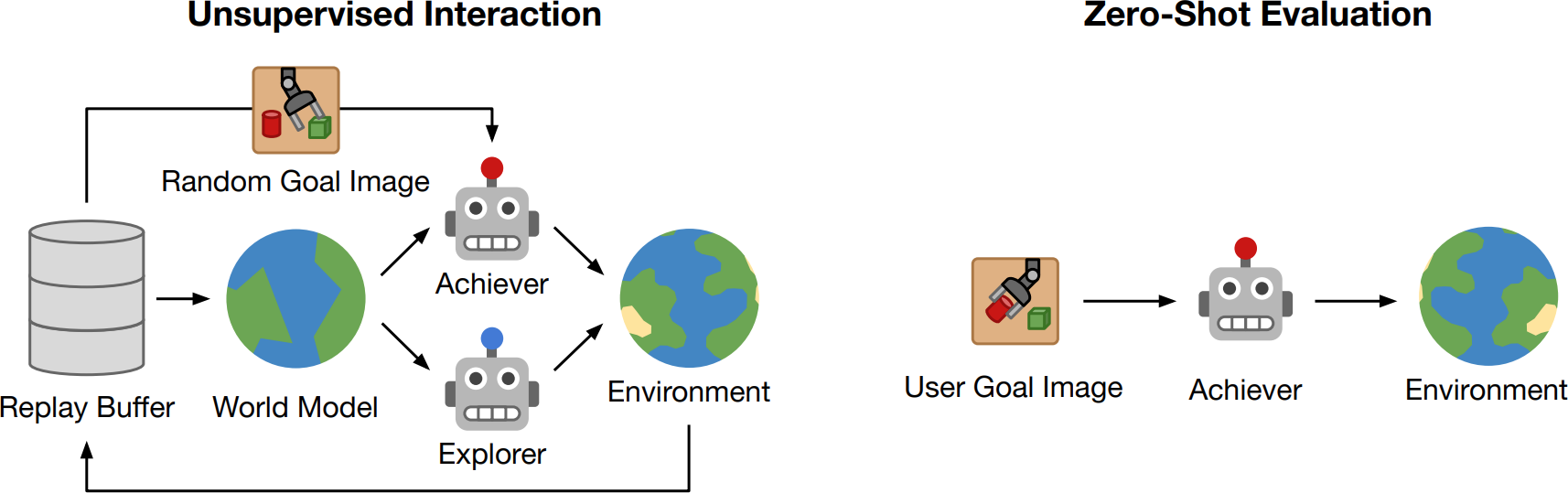

Method

LEXA explores the world and learns to reach arbitrary goal images purely from pixels, without any form of supervision. After the unsupervised interaction phase, LEXA solves complex tasks by reaching user-specified goal images.
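As a rough illustration of the two phases described above, the toy Python sketch below mimics the control flow on a tiny problem. It is not LEXA's implementation, and every name in it is an assumption made for illustration: a hypothetical 1-D chain (`ChainEnv`) stands in for image observations, a transition table stands in for the learned world model, visit counts with one-step lookahead stand in for the explorer's foresight, and shortest-path dynamic programming over the table stands in for training the achiever on imagined rollouts.

```python
import random
from collections import defaultdict

ACTIONS = (-1, +1)  # move left or right along the chain


class ChainEnv:
    """Hypothetical 1-D chain; a stand-in for an image-based environment."""

    def __init__(self, size=8):
        self.size = size
        self.state = 0

    def reset(self):
        self.state = 0
        return self.state

    def step(self, action):
        self.state = max(0, min(self.size - 1, self.state + action))
        return self.state


def explore_action(model, visits, state, rng):
    """One-step lookahead: prefer the least-visited predicted next state.

    Transitions missing from the model count as maximally novel.
    """
    def novelty(action):
        nxt = model.get((state, action))
        return float("inf") if nxt is None else -visits[nxt]

    best = max(novelty(a) for a in ACTIONS)
    return rng.choice([a for a in ACTIONS if novelty(a) == best])


def unsupervised_phase(env, episodes=10, horizon=30, seed=0):
    """Explore without rewards; return the learned model and discovered states."""
    rng = random.Random(seed)
    model, visits = {}, defaultdict(int)
    for _ in range(episodes):
        state = env.reset()
        visits[state] += 1
        for _ in range(horizon):
            action = explore_action(model, visits, state, rng)
            nxt = env.step(action)
            model[(state, action)] = nxt  # update the (tabular) world model
            visits[nxt] += 1
            state = nxt
    return model, sorted(visits)


def train_achiever(model, goals):
    """Build a goal-conditioned policy using only the learned model."""
    dist = {}
    for goal in goals:
        d = defaultdict(lambda: float("inf"))
        d[goal] = 0
        changed = True
        while changed:  # relax step counts to the goal until they stop changing
            changed = False
            for (s, _a), nxt in model.items():
                if 1 + d[nxt] < d[s]:
                    d[s] = 1 + d[nxt]
                    changed = True
        dist[goal] = d

    def policy(state, goal):
        known = [a for a in ACTIONS if (state, a) in model]
        return min(known, key=lambda a: dist[goal][model[(state, a)]])

    return policy


def reach(env, policy, goal, max_steps=20):
    """Zero-shot evaluation: try to reach a user-specified goal state."""
    state = env.reset()
    for _ in range(max_steps):
        if state == goal:
            return True
        state = env.step(policy(state, goal))
    return state == goal


env = ChainEnv()
model, goals = unsupervised_phase(env)
achiever = train_achiever(model, goals)
results = {goal: reach(env, achiever, goal) for goal in goals}
print(results)
```

The point of the sketch is the separation of roles: exploration never sees a task, the achiever is fit only against the model using states the explorer discovered, and evaluation supplies a goal that the achiever must reach without further updates.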

RoboYoga

These goal images require the agent to reach and maintain diverse poses.

RoboBins

These goal images require picking and placing two blocks, one after another.

Kitchen

These challenging goal images require the agent to perform three tasks, one after another.