Hi, I’m Danijar!

I’m a Staff Research Scientist at Google DeepMind in San Francisco. My research aims to build generally intelligent machines that understand and interact with the world, focusing on three key areas:

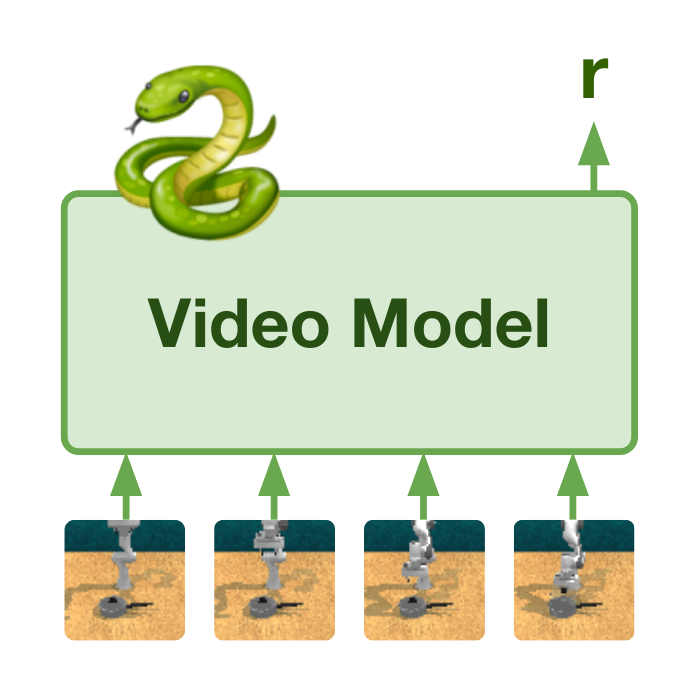

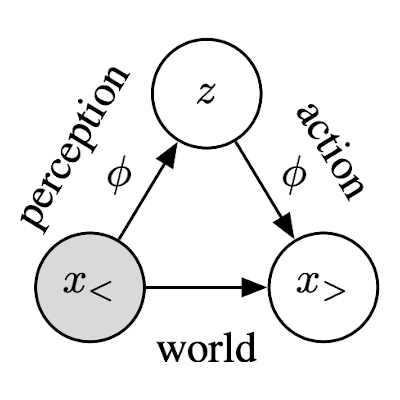

World Models: Learning powerful predictive models that equip AI with a deep understanding of the physical world and enable planning by imagining the future.

Key papers: Dreamer 4, Dreamer 3, DayDreamer, PlaNet, TECO, Dynalang

Temporal Abstraction: Achieving long-term tasks by breaking them down into subgoals that enable abstract planning and are realized through low-level actions.

Key papers: Director, Latent Skill Planning, Clockwork VAE

Scalable Objectives: Designing objectives that let AI self-improve beyond human input, for example by autonomously exploring and practicing open-ended goals.

Key papers: Action Perception Divergence, Plan2Explore, LEXA, VIPER

My PhD thesis: Embodied Intelligence Through World Models

Featured Media

See YouTube for more videos.

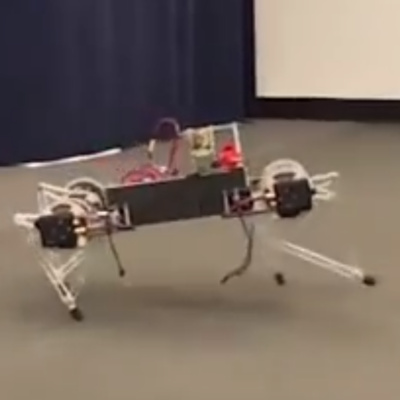

Learning to Walk in the Real World in 1 Hour

AI Masters Minecraft

Will We Know AGI When We See It?

Interview in Daily Mail

Interview in MIT Technology Review

Lab Spotlight in TechCrunch

Unsupervised Intelligent Agents Technical Talk

The Future of AI and World Models

Talk RL Podcast

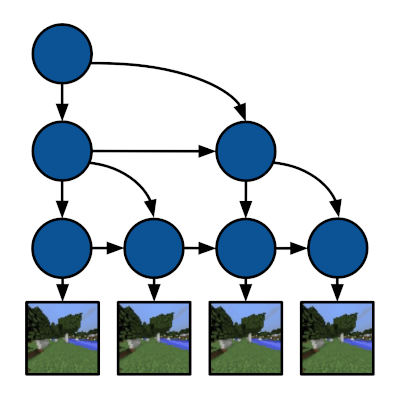

Deep Hierarchical Planning from Pixels

Introducing Plan2Explore

Google's PlaNet AI Learns Planning from Pixels

Highlighted Work

See Google Scholar for more publications.

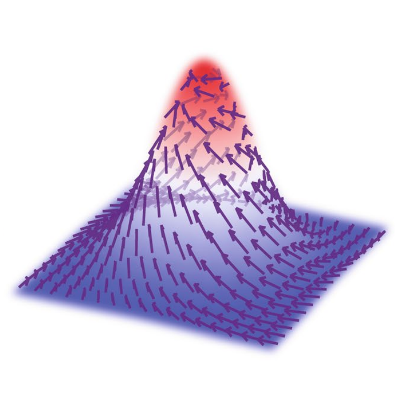

One Step Diffusion via Shortcut Models

ICLR 2025 (oral, 1.5%)

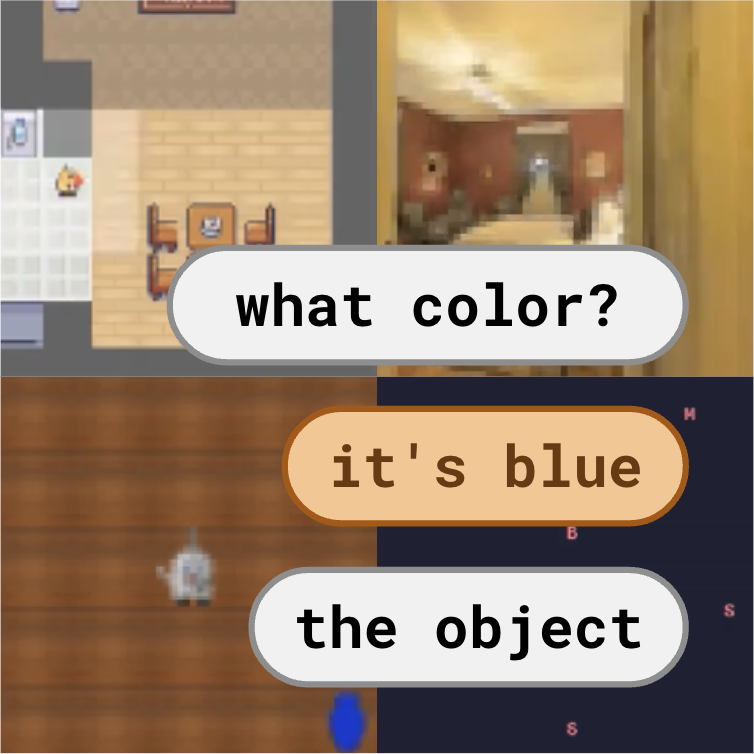

Learning to Model the World with Language

ICML 2024 (oral)

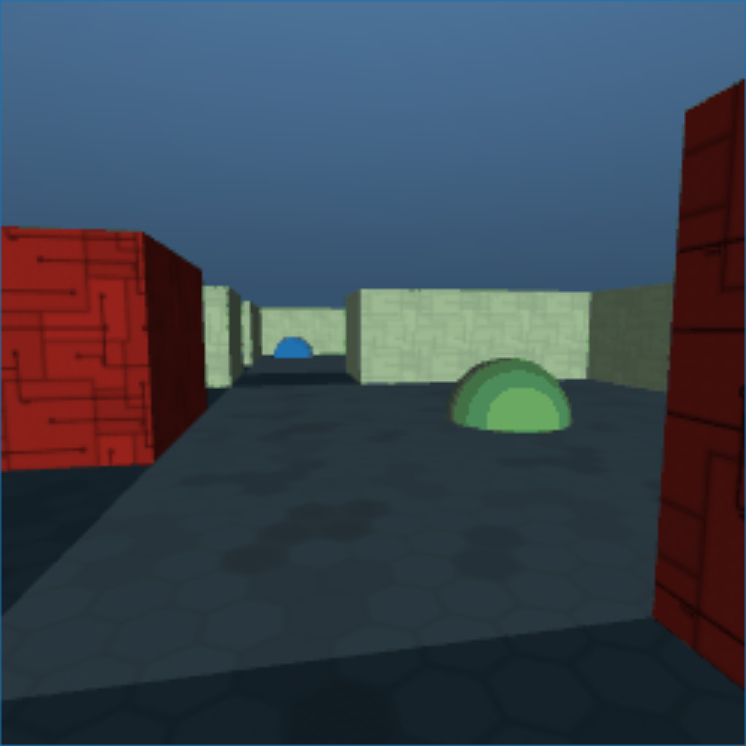

Evaluating Long-Term Memory in 3D Mazes

ICLR 2023

Deep Hierarchical Planning from Pixels

NeurIPS 2022

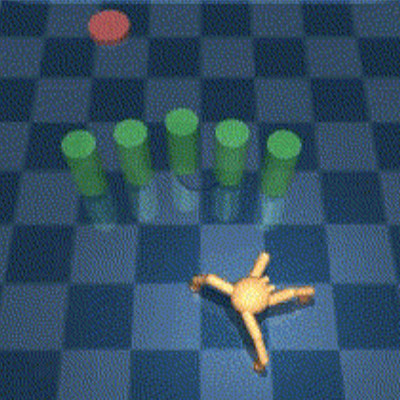

Discovering and Achieving Goals via World Models

NeurIPS 2021 (26%), URL 2021 (oral), SSL 2021 (oral)

Benchmarking the Spectrum of Agent Capabilities

ICLR 2022, DRLW 2021 (oral)

Clockwork Variational Autoencoders

NeurIPS 2021 (26%)

Latent Skill Planning for Exploration and Transfer

ICLR 2021 (28%)

Mastering Atari with Discrete World Models

ICLR 2021 (28%)

Planning to Explore via Self-Supervised World Models

ICML 2020 (22%)

Dream to Control: Learning Behaviors by Latent Imagination

ICLR 2020 (oral, 4%), DRLW 2019 (oral)

A Deep Learning Framework for Neuroscience

Nature Neuroscience

Noise Contrastive Priors for Functional Uncertainty

UAI 2019 (26%)

Learning Latent Dynamics for Planning from Pixels

ICML 2019 (23%)

Sample-Efficient Reinforcement Learning with Stochastic Ensemble Value Expansion

NeurIPS 2018 (oral, 0.6%)

Sim-to-Real: Learning Agile Locomotion For Quadruped Robots

RSS 2018 (31%)

Short Biography

Danijar Hafner is a Staff Research Scientist at Google DeepMind. He received his PhD from the University of Toronto with Jimmy Ba, was a visiting student at UC Berkeley with Pieter Abbeel, interned at Google Brain for 6 years, and has been named a Vanier Scholar. He completed his MRes at UCL and the Gatsby Unit with Tim Lillicrap and Karl Friston. Danijar’s research aims to build generally intelligent machines that understand and interact with the world.